Image: Shutterstock

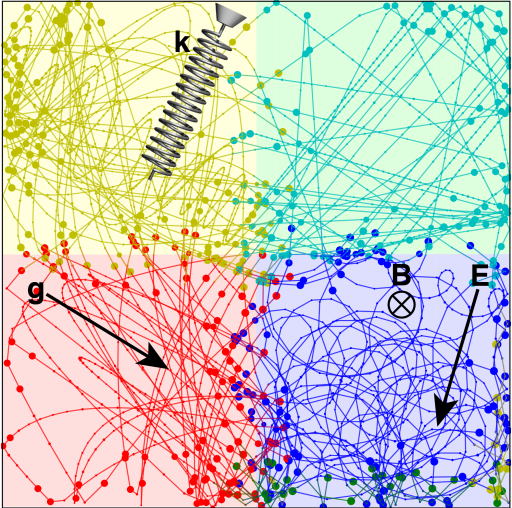

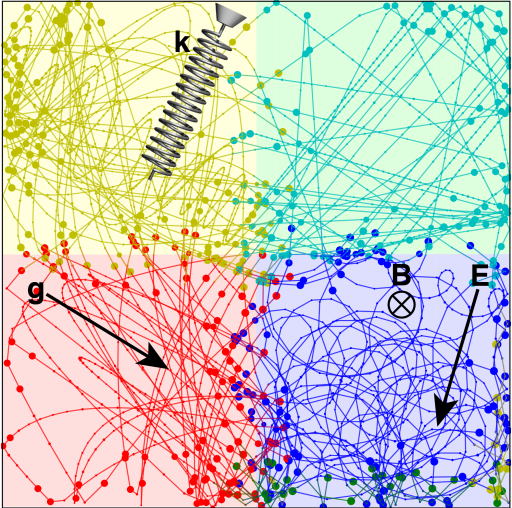

Two researchers from MIT have created an “AI physicist” that is able to generate theories about the physical laws of imaginary universes. It marks a major step toward creating machine learning algorithms that are capable of not just finding patterns, but extrapolating from those patterns to make predictions about the future. This would lay the foundation for scientific discoveries made entirely by artificial intelligences.

When an AI is given a dataset, it analyzes that dataset to create a model. The nature of this model will depend on the task. For instance, if I wanted to train an AI to recognize a cat, I could feed it thousands of cat pictures so that the algorithm could generalize from similar features found in each photo to create a model of a cat.

The way artificial intelligences create models is similar to the way scientists use theories to generalize from a particular instance of a phenomenon to all instances of that phenomenon in similar contexts. There’s one crucial difference, however.

Teaching AI how to partition data to create small models that can be added together to create larger models has proven to be remarkably challenging for machine learning researchers. As detailed in a paper posted to arXiv last week, however, Tailin Wu and Max Tegmark, two physicists from MIT, have made a major step in that direction with their “AI physicist.”

To make this happen, Tegmark and Wu endowed their machine learning algorithm with four strategies that are also employed by human scientists so that it could generate theories about complex observations. These strategies were divide-and-conquer (generate multiple theories, each of which fits only a part of the data), Occam’s razor (use the simplest possible theory), unification (combine the theories), and “lifelong learning” (try applying the theories to future problems).

After these strategies were coded into the machine learning algorithm, Tegmark and Wu presented it with a series of increasingly complex virtual environments governed by strange physical laws and tasked the AI with making sense of them. In particular, the goal of the AI was to predict the motion of an object in two dimensions as accurately as possible. This would require the AI to generate unique physical theories for each “mystery environment” to understand how an object would move in that environment.

As Tegmark and Wu discovered, the AI physicist has an increasingly hard time understanding the laws of physics as the environments become more complicated. All told, the AI physicist was exposed to 40 different mystery environments and was able to generate correct theories about the physical laws that governed them in over 90 percent of the cases. Moreover, Tegmark and Wu’s AI physicist was able to reduce prediction errors a “billionfold” over conventional machine learning algorithms.

This work may have big consequences for the way humans do science in the future. In particular, it may be especially useful for understanding massively complex datasets, such as those used in climate modeling or economics. Indeed, the world’s next Newton or Einstein may just be some computer code.



In the example above, the AI was fed pictures that already focused on the cat. A much harder task, and one analogous to the process of doing science, would be to feed the AI pictures of cats in similar environments, say, a forest. With this dataset, an AI tasked with creating a model of a cat would have to ignore irrelevant details (such as all the plants) and focus only on the cat. Alternatively, it might arrive at a model that depicts all cats as living in forests. If you then fed the AI a picture of a cat asleep on your bed, it would fail to recognize it because its model was faulty.

Although the AI wasn’t entirely wrong (there are a number of cat species that live exclusively in forests), it made the mistake of creating one big model and attempting to fit that model to all the data. A more fruitful approach, and the one employed by scientists, is to create small models or theories that apply to subsets of observational data and then add these small theories together until you hopefully arrive at a “theory of everything.”
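To make the "small models on subsets of the data" idea concrete, here is a toy sketch in Python. It is not the method from Wu and Tegmark's paper. It assumes a made-up one-dimensional "mystery environment" in which one straight-line law holds for x < 0 and a different one holds for x >= 0, and every function name in it (`fit_line`, `squared_error`, `best_split`) is hypothetical. The sketch compares one big model fitted to all the data with two small models, each fitted to its own part of the data.

```python
# Toy illustration only: NOT the algorithm from Wu and Tegmark's paper.
# The data and all function names below are invented for this example.

def fit_line(points):
    """Ordinary least-squares fit of y = a*x + b; returns (a, b)."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    var_x = sum((x - mean_x) ** 2 for x, _ in points)
    if var_x == 0:
        # All x values identical: fall back to a flat line through the mean.
        return 0.0, mean_y
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    a = cov_xy / var_x
    return a, mean_y - a * mean_x


def squared_error(points, line):
    """Sum of squared differences between the data and the line's predictions."""
    a, b = line
    return sum((y - (a * x + b)) ** 2 for x, y in points)


def best_split(points, min_size=2):
    """Try every split of the x-sorted data into a left and right part,
    fit one line to each part, and keep the split with the lowest total error."""
    points = sorted(points)
    best = None
    for i in range(min_size, len(points) - min_size + 1):
        left, right = points[:i], points[i:]
        lines = (fit_line(left), fit_line(right))
        err = squared_error(left, lines[0]) + squared_error(right, lines[1])
        if best is None or err < best[0]:
            # Record the error, the first x value of the right part, and both lines.
            best = (err, points[i][0], lines)
    return best


# Made-up environment: y = 2x + 1 for x < 0, and y = -3x + 4 for x >= 0.
data = [(x, 2 * x + 1) for x in range(-5, 0)] + [(x, -3 * x + 4) for x in range(0, 6)]

single_error = squared_error(data, fit_line(data))
split_error, boundary, (left_law, right_law) = best_split(data)

print("one big model, squared error:", single_error)
print("two small models, squared error:", split_error)
print("boundary starts at x =", boundary)
print("left law (slope, intercept):", left_law)
print("right law (slope, intercept):", right_law)
```

In this toy case the single line cannot describe both regions at once, while the two small models each recover the law that generated their part of the data. A real system would also have to discover where each theory applies without being handed neatly sorted, noise-free points.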
