Image via Shutterstock

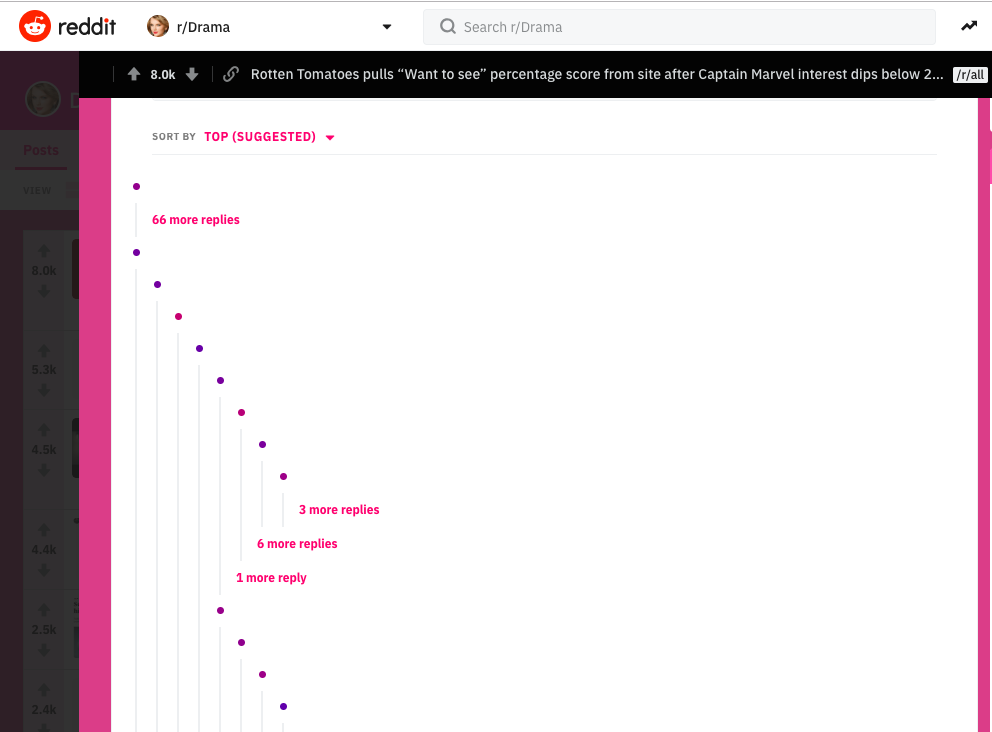

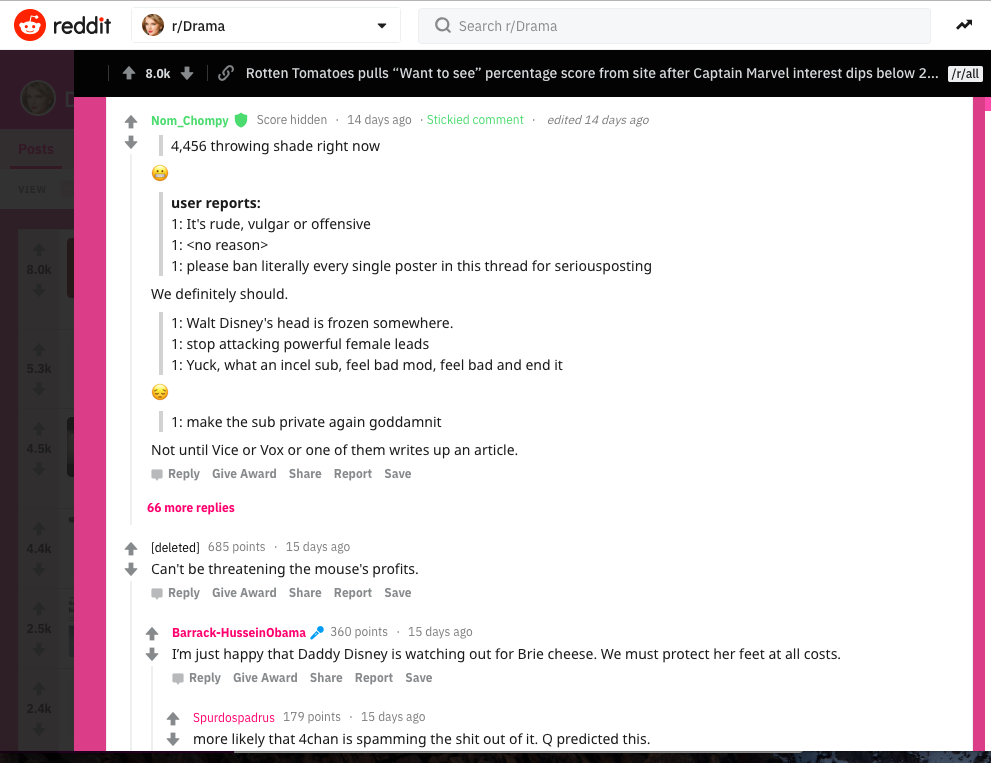

As someone who spends a lot of time letting the full, slimy brunt of the internet wash over me like a sewage wave, I find the thought of quieting comment sections and replies based on their toxicity an appealing one.

Tune is a Chrome extension that does just that: Using a dial, you can “turn down the volume” of comments on social media platforms including Twitter, Reddit, Facebook, and YouTube. The dial has settings from “quiet” all the way up to “blaring,” depending on how aggressive you want the content filter to be.

Tune was created by Jigsaw, a technology incubator that was formerly known as Google Ideas but is now a subsidiary of Alphabet, Inc. To weed out toxic comments, the extension uses Perspective, an API that uses machine learning models to score text for toxicity. While Perspective is meant for people moderating or writing comments, Tune is meant for the rest of us: the lurkers.

“Tune lets you turn the volume of toxic comments down for ‘zen mode’ to skip comments completely, or turn it up to see everything, even the mean stuff,” Tune product manager CJ Adams wrote in a blog post about the extension. “Or you can set the volume somewhere in between to customize the level of toxicity (e.g. attacks, insults, profanity, etc) you’re willing to see in comments.”

Online commenting is a broken mess largely because of algorithmic moderation. We see it in how Facebook’s algorithm surfaces (or doesn’t surface) stories in the news feed, and in how Tumblr relied on an algorithm to catch NSFW blogs last year with mixed results.

Can another algorithm adequately catch, let alone intelligently define, what is toxic speech and what isn’t? To find out, I set Tune to “quiet” and took it for a spin around some of the most volatile spaces on Reddit.

Tune had plenty to work with on r/Drama, a subreddit self-described as “one of the most malevolent, cruel, coldhearted online communities you'll ever find.” The extension almost entirely redacted a thread about Rotten Tomatoes pulling the “want to see” pre-release rating feature after Captain Marvel was review-bombed by people giving it negative ratings.

So, Tune succeeded in hiding comments, but was it hiding the right comments, the truly toxic ones? With the dial turned back up to “blaring,” the redacted comments turned out to be pretty run-of-the-mill for r/Drama (mildly “toxic” for the subreddit), but Tune’s content filter seems to especially dislike curse words and slurs, which a lot of comments on the subreddit contain. A lot of the comments that Tune blocked weren’t necessarily toxic, just sweary.
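To picture why a dial like this might sweep up merely sweary comments, here is a minimal sketch of threshold-based filtering. It assumes the dial maps to a cutoff on a 0-to-1 toxicity score of the kind Perspective returns. The cutoff values, the example scores, and the names `DIAL_THRESHOLDS`, `Comment`, and `visible_comments` are all hypothetical; Tune's actual internals aren't described in its announcement as quoted here.

```python
from dataclasses import dataclass

# Hypothetical cutoffs: the highest toxicity score still shown at each
# dial position. Tune's real values are not public in this article.
DIAL_THRESHOLDS = {
    "quiet": 0.3,
    "blaring": 1.0,
}


@dataclass
class Comment:
    text: str
    toxicity: float  # made-up score in [0, 1], standing in for a model's output


def visible_comments(comments, dial):
    """Return only the comments whose score is at or below the dial's cutoff."""
    limit = DIAL_THRESHOLDS[dial]
    return [c for c in comments if c.toxicity <= limit]


comments = [
    Comment("Great write-up, thanks for sharing.", 0.02),
    Comment("This is a damn good point.", 0.55),         # sweary, not hostile
    Comment("You are an idiot and should quit.", 0.91),  # a personal attack
]

quiet = visible_comments(comments, "quiet")
blaring = visible_comments(comments, "blaring")

print([c.text for c in quiet])
print(len(blaring))
```

If a model scores profanity highly regardless of intent, as the made-up 0.55 above imagines, a single cutoff has no way to tell an enthusiastic curse from an insult: both land above the “quiet” line and disappear together.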

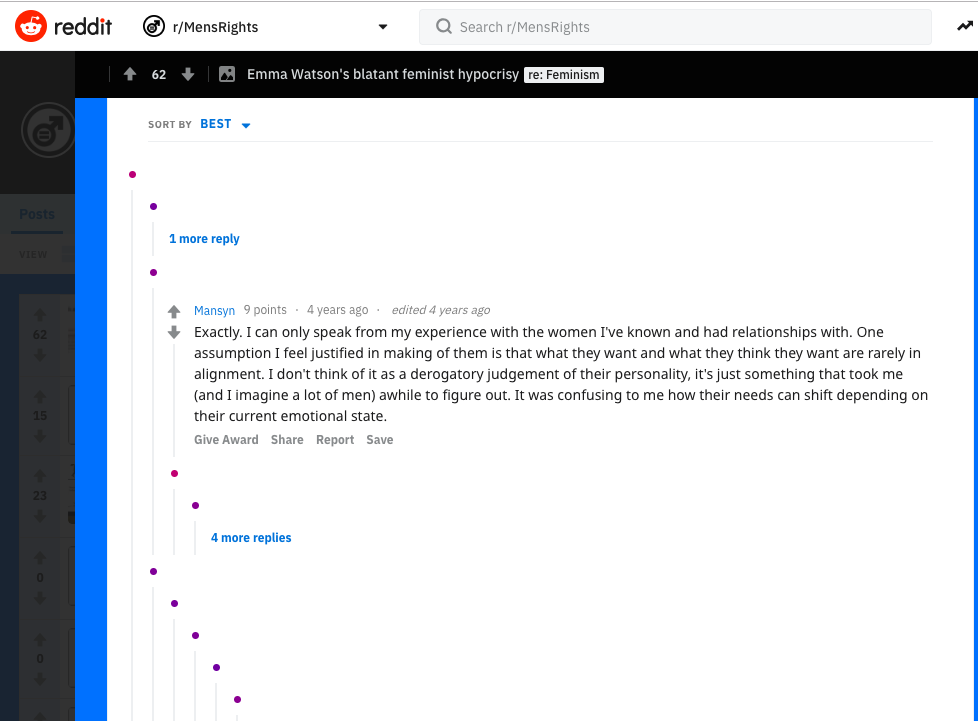

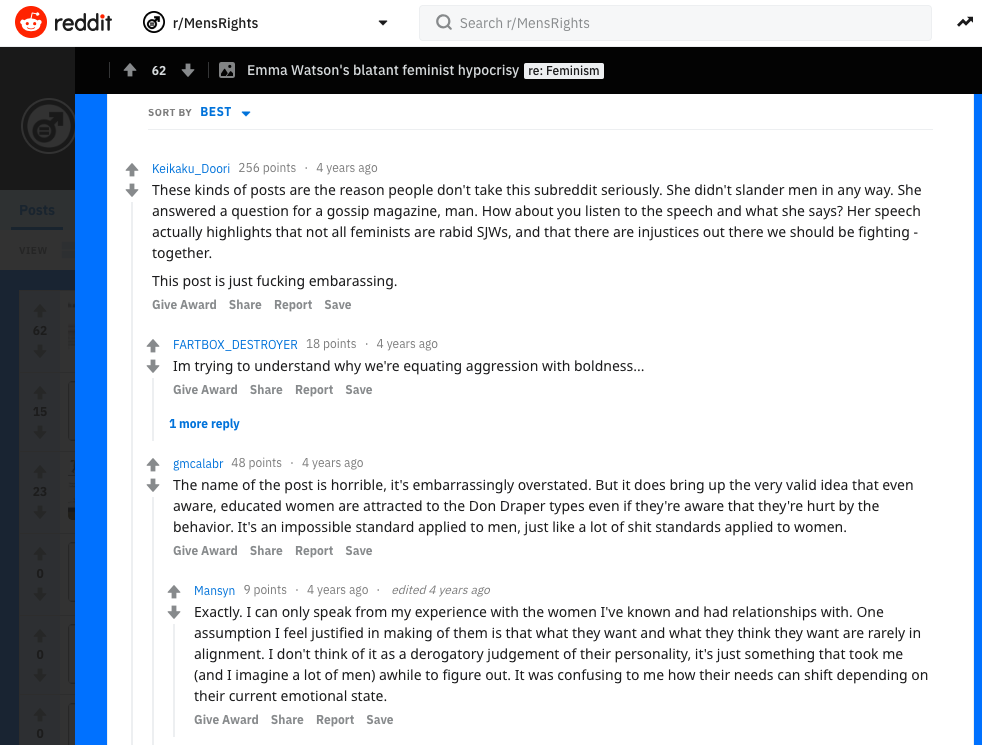

Since Tune’s results on r/Drama were pretty mixed, I needed another subreddit to give it a test run. Over on r/MensRights, “a place for those who wish to discuss men's rights and the ways said rights are infringed upon,” Tune again censors the comments containing curse words on a thread titled “Emma Watson's blatant feminist hypocrisy,” even when the comments are condemning the questionable tone of the thread title.

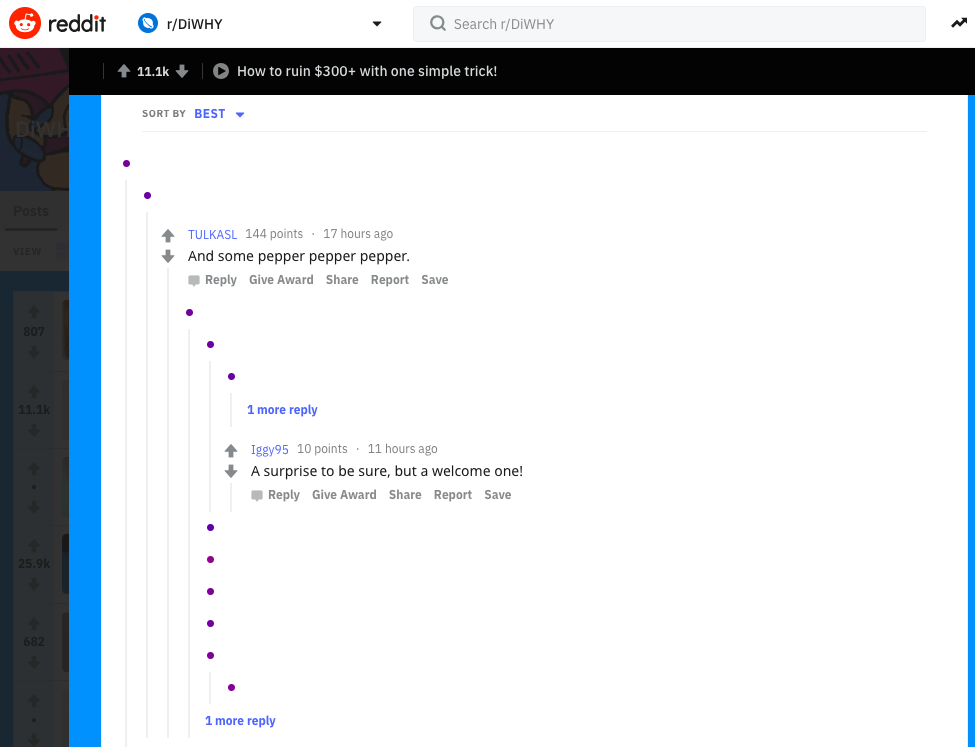

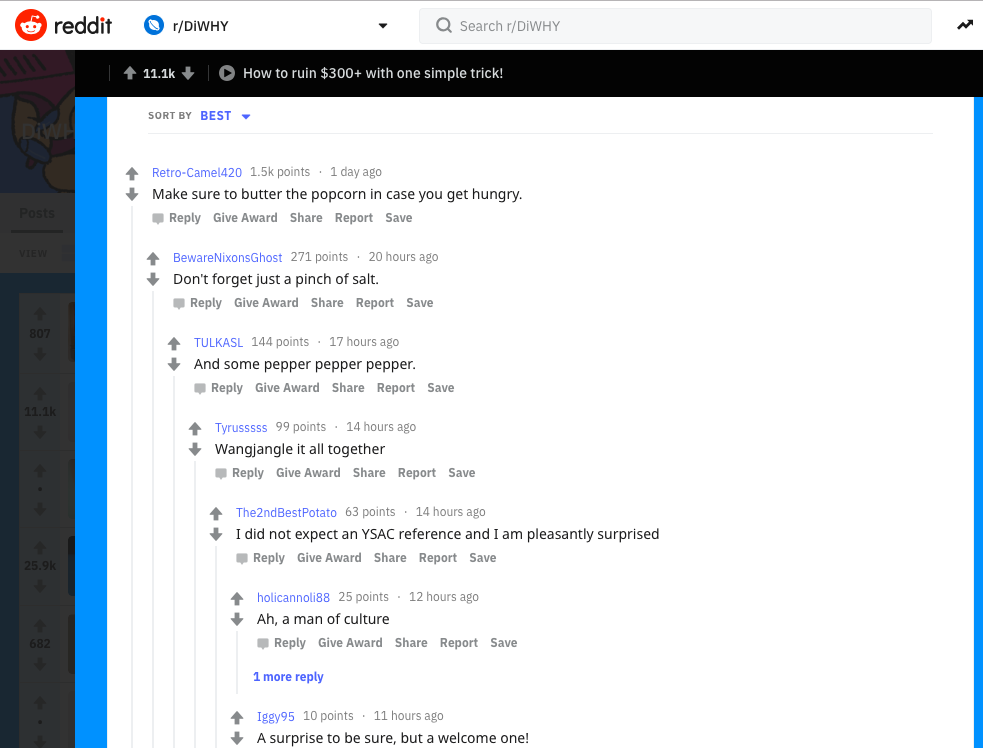

Most adults know that cursing isn’t necessarily an indicator of toxicity (strong language conveys strong feeling, positive or negative), but Tune’s filter doesn’t realize this.

So, I tested Tune out on one of the most pure and good subreddits: r/DiWHY, which is devoted to posting the wackiest, most unnecessary DIY hack jobs users can find. In a thread about a horrible “packing hack,” Tune hid several comments for no reason that I could discern; they didn’t even contain any swearies. One hidden comment just said “pepper pepper pepper,” which, I guess that much pepper is literally toxic?

On my Twitter feed, the results of keeping Tune set to “quiet” were equally confusing. It decided that tweets promoting books and an image of some architecture were toxic, and censored those.

The app is still in an “experimental” phase, according to its blog announcement post, so it’s hard to make a final judgement on whether this sort of thing would actually be useful to regular people using the internet. Even if it worked perfectly, I can’t imagine willfully turning a blind eye to huge swaths of comments online, even in communities considered “toxic.”

Right now, though, Tune shows that you can’t trust an algorithm to moderate speech. But that is how a lot of the internet works. Algorithmic moderation can be especially harmful if you’re a sex worker or sex educator, or a member of another marginalized group.

Twitter often down-ranks sex workers’ tweets or hides their accounts from search, even when they follow Twitter’s rules. Instagram frequently bans accounts for being too adult, sometimes without explanation, and again, even when those accounts follow the rules or are for educational purposes. Apple's iOS 12 explicit content filter allowed white supremacist hate speech and graphic violence to slip through, but blocked sex education results. Tumblr’s move to ban adult content last year, which initially used an algorithm to detect and hide NSFW posts, was a comically flawed effort that sanitized the platform of everything from nude sculptures to raw chicken.

Twitter’s own system for hiding sensitive comments isn’t entirely different from what Tune does: A “hide replies” functionality announced in late February lets users hide content they don’t want to see, if a thread gets too toxic or harassment becomes a problem. In 2017, the platform started clustering “less relevant” replies at the bottom of threads, based on factors like the tweet’s content and the user’s number of followers.

Censoring large sections of people’s voices out of your view is sometimes necessary, for example when you’re being harassed or targeted by a troll campaign. But it’s a risky move to trust an algorithm to “turn down the volume” wholesale, even if not doing so means seeing more of the “mean stuff.”
