Illustration by Seth Laupus

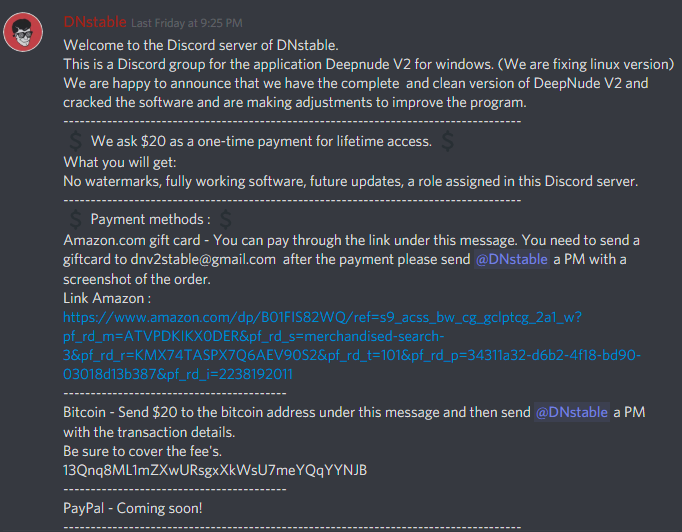

On Monday morning, chat platform Discord banned a server that was selling a version of DeepNude, a program that creates non-consensual nude images of women using machine learning algorithms.

The server, which Motherboard accessed, included a link to download a version of the DeepNude software and instructions for how to pay the creators (who were asking for $20 donations to use the program) through Amazon gift cards or Bitcoin.

It also included several algorithmically generated nude images of women created with the program and discussions about how this version is new and improved over the original.

Motherboard reported on the existence of DeepNude on June 26, after its creator started promoting the program on his website for $50. The following day, the programmer, who goes by Alberto, took the site and servers offline, and stated that any further use of his creation would be against the terms of use for his software.

But since the program was available to download and use offline, it was easy to replicate once someone had it on their hard drive. From there, users could upload it elsewhere or share it in private forums, which is how it got to Discord.

An invite link to the server, advertising access to the application within it, was posted to a Twitter account that, according to its bio, is devoted to bringing DeepNude back to life.

Twitter did not immediately respond to a request for comment. The account tweeted that although the server is banned, its organizers plan to launch a new website.

When activity is flagged, Discord investigates and decides whether to take action. In this case, Discord found the server and its users in violation of the platform's community guidelines, which forbid sharing or linking to content "with intent to shame or degrade another individual," and promoting sexually explicit content without the subject's consent.

"The sharing of non-consensual pornography is explicitly prohibited in our terms of service and community guidelines," a spokesperson for Discord told Motherboard. "We will investigate and take immediate action against any reported terms of service violation by a server or user. Non-consensual pornography warrants an instant shut down on the servers and ban of the users whenever we identify it, which is the action we took in this case."

This is similar to the stance Discord took after people started using the platform to share datasets, results, and troubleshooting tips for deepfakes. Other social media platforms, including Twitter, Gfycat, and Reddit, took similar stands against algorithmically generated non-consensual porn after Motherboard found evidence of it on their sites.

Emanuel Maiberg contributed reporting to this article.

