For the most part, the first tasks to be outsourced to machines are the most routine, since these jobs are relatively easy to reduce to quantifiable parameters that can be used to train an algorithm. The upshot was that artists and other creative types could rest easy, since quantifying creativity and aesthetics is much more difficult than, say, quantifying the rules of the road for a self-driving Uber.


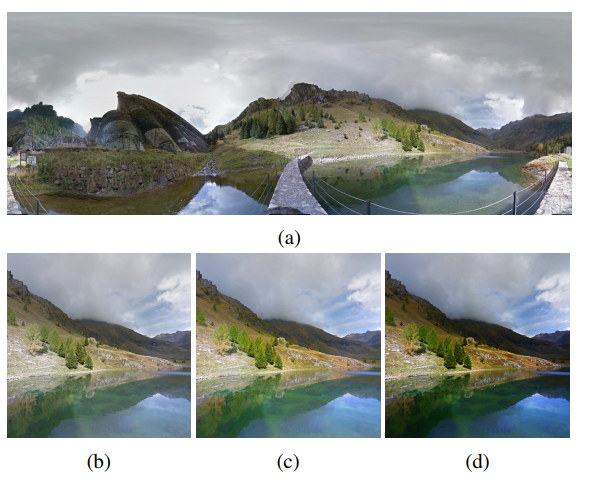

Nevertheless, AI researchers are hard at work on the problem of artificial creativity, and machines are slowly learning to write poems, novels and even movie scripts. But according to a new paper by the Google researchers Hui Fang and Meng Zhang, professional photographers might be the next casualties of the creative AI revolution.

Posted to arXiv earlier this month, the paper describes an artificial neural network called Creatism, a "system for artistic content creation." The idea was to break photo aesthetics down into quantifiable parameters that could be taught to a machine using examples of professional work. The result, as Fang and Zhang write in their paper, is an algorithm that "mimics the workflow of a landscape photographer, from framing for the best composition to carrying out various post-processing operations."

To make this happen, Fang and Zhang first used formulas to define different aesthetic aspects of photography, such as image saturation, composition, and the level of detail, based on examples by professional photographers.

Next, they used a collection of about 15,000 thumbnails of highly ranked landscape photos from 500px.com to teach their neural net how to crop these thumbnails and add lighting effects to them to obtain the most aesthetically pleasing landscape photo. In essence, the neural net cropped and relit each input photo, producing several different versions of it. These versions were then evaluated by the researchers and four professional photographers to select the best one.
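The "make several versions, keep the best" step can be pictured as a search over candidate crops. The sketch below is a toy illustration under stated assumptions, not Creatism's actual method: it slides crop windows of a few sizes across an image and keeps the one a scoring function rates highest. The function names, the crop sizes, and `toy_score` (a hypothetical stand-in for the trained aesthetic model) are all invented for illustration, and lighting adjustments are left out.

```python
from typing import Callable, List, Tuple

# A crop window is (top, left, height, width) in pixels.
Crop = Tuple[int, int, int, int]


def candidate_crops(img_h: int, img_w: int,
                    scales_pct: Tuple[int, ...] = (60, 80, 100),
                    steps: int = 10) -> List[Crop]:
    """Slide windows of several sizes (as % of the image) across a coarse grid."""
    crops: List[Crop] = []
    step_y = max(1, img_h // steps)
    step_x = max(1, img_w // steps)
    for pct in scales_pct:
        h, w = img_h * pct // 100, img_w * pct // 100
        for top in range(0, img_h - h + 1, step_y):
            for left in range(0, img_w - w + 1, step_x):
                crops.append((top, left, h, w))
    return crops


def best_crop(img_h: int, img_w: int, score: Callable[[Crop], float]) -> Crop:
    """Return the candidate window that the scoring function rates highest."""
    return max(candidate_crops(img_h, img_w), key=score)


def toy_score(crop: Crop, subject: Tuple[float, float] = (40, 70)) -> float:
    """Hypothetical stand-in for a learned aesthetic model: rewards larger
    crops and penalizes distance from an assumed subject point (row, col)."""
    top, left, h, w = crop
    cy, cx = top + h / 2, left + w / 2
    dist = ((cy - subject[0]) ** 2 + (cx - subject[1]) ** 2) ** 0.5
    return h * w / 1000 - dist


if __name__ == "__main__":
    print(best_crop(100, 150, toy_score))
```

In a real system the scoring function would be the trained network; here it is a hand-written heuristic chosen only so the example runs.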


After the neural net had learned the parameters that defined a good crop and good lighting, it was time to let it 'take' its own photos. To do this, the researchers used a number of Google Street View panoramas of scenic locations around North America, such as Banff, the Grand Canyon, Yellowstone, and Big Sur. These panoramas were fed to the neural net, which had to generate a unique photo of each environment using its learned crop and lighting parameters.

The final step of the process was a photographic Turing test to determine whether people could tell that a given photo had been generated by a machine. To do this, Fang and Zhang asked six professional photographers, all of whom had at least a bachelor's degree in photography and two years of professional experience, to evaluate the photos independently. Each photo was scored on a scale from 1 to 4, where 1 indicated a beginner-level photo with little artistic merit and 4 indicated a professional photo. Importantly, none of the photographers were aware that any of the photos had been generated by a machine.

Amazingly, of the 173 photos evaluated by the professional photographers, 41 percent were rated at or above a semi-professional level (a score of 3 or greater), and 13 percent scored 3.5 or higher. In comparison, 45 percent of actual professional photos received a score of 3.5 or higher.
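For a sense of scale, 41 percent of 173 photos is about 71 photos (0.41 × 173 ≈ 70.9), and 13 percent is about 22 or 23 (0.13 × 173 ≈ 22.5); since the article's percentages are rounded, the exact counts are uncertain. The article doesn't say how the six ratings per photo were combined, but a 3.5 threshold on a 1 to 4 scale suggests averaging. The sketch below assumes exactly that, and the function names and sample ratings are hypothetical, not data from the paper.

```python
from statistics import mean
from typing import List, Sequence


def photo_scores(ratings_per_photo: Sequence[Sequence[int]]) -> List[float]:
    """Average each photo's 1-4 ratings (assumed aggregation rule)."""
    return [mean(r) for r in ratings_per_photo]


def share_at_or_above(scores: Sequence[float], threshold: float) -> float:
    """Fraction of photos whose score is greater than or equal to threshold."""
    if not scores:
        raise ValueError("need at least one score")
    return sum(1 for s in scores if s >= threshold) / len(scores)


if __name__ == "__main__":
    # Hypothetical ratings from three judges for four photos; not from the paper.
    ratings = [[3, 4, 3], [2, 2, 3], [4, 3, 4], [1, 2, 2]]
    scores = photo_scores(ratings)
    print(share_at_or_above(scores, 3.0), share_at_or_above(scores, 3.5))
```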


So while Google's Creatism algorithm might not be the next Ansel Adams, it could still give your best Instagram photos a run for their money. The researchers posted many of the machine-generated photos to an album on GitHub, and a few have been included below.

Image: Google/arXiv

Image: Google/arXiv

Image: Google/arXiv

Image: Google/arXiv
