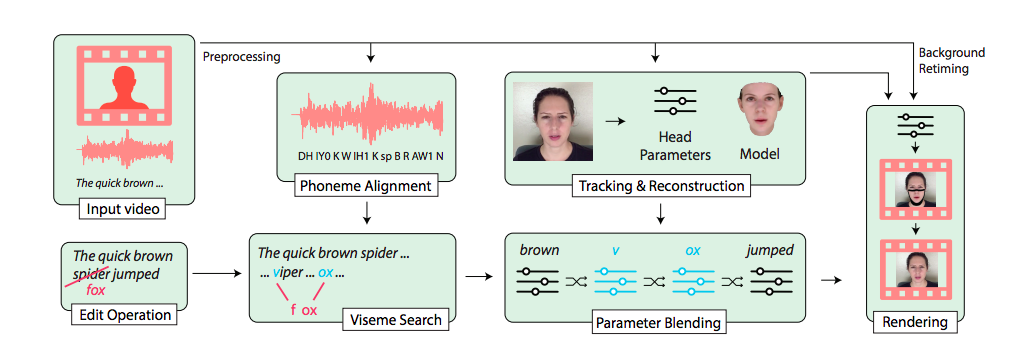

Image via Fried et al.

A research team of machine learning experts from Stanford and Princeton Universities, the Max Planck Institute for Informatics, and Adobe created a method for literally putting words in people's mouths, using just text inputs and a source video.

By analyzing the speech patterns in a video of one person speaking into a camera, they're able to swap whole phrases for completely different ones. The result is pretty realistic.

Their project, "Text-based Editing of Talking-head Video," was published online this week as a whitepaper to be presented at the SIGGRAPH conference in July.

In the video demonstration, a woman saying "one hundred and ninety one point four" is made to say "one hundred and eighty two point two" instead. A man reciting the Apocalypse Now quote "I love the smell of napalm in the morning" is made to seem like he's saying "I love the smell of french toast in the morning."

To do this, the researchers identify and isolate the phonemes (tiny units of sound within speech) in the spoken phrase. They also create a 3D face model that tracks how the face and lips move with the words. Then, they find the visemes (groups of sounds that look the same in how the lips move, such as "v" and "f") and combine parts from the face model to match the new phrases. Someone saying "viper" and "ox" in different parts of a video can be sliced up into a face model saying the word "fox."

To make the edited voice match the rest of the person's speech, the researchers use VoCo, Adobe's voice-editing software, which the company unveiled in 2016.

If you watch closely and know what to look for (slightly stiff mouth movements, small inconsistencies in the motion of the mouth) you can tell where the new words are inserted. But without knowing where the edits occur, your eyes easily gloss over them.

In terms of fiction versus reality, this might be the most startling part of this processing technique.
It's a small change, isolating the mouth and jawline and inserting only a few words within a longer sentence. In context, your eyes see what you want to see, filling in the rough spots to look seamless.

To test how convincing their edited videos were, the researchers conducted a web-based study of 138 participants, which asked them to view real and edited videos and rate their response to the phrase "this video clip looks real to me" on a five-point scale. More than half of the participants, around 60 percent, rated the edited video clips as real. The unedited, "ground truth" videos were only rated to be "real" around 80 percent of the time.

Since algorithmically altered face-swap videos, or deepfakes, became a mainstream phenomenon, scholars have been sounding the alarm about what realistic, manipulated videos will do to society's concepts of truth and reality. This small user study shows that those concerns are valid, and already coming to life: when everything is fake, nothing is real.

People are already easily duped when it comes to videos that confirm what they want to believe, as we saw with the edited video of Nancy Pelosi. But when they're put on alert, confirmation bias swings the other direction, to total disbelief of what's actually real.

In their whitepaper, the researchers behind this text-swapping method devote several paragraphs to the ethical considerations of such technology. "The availability of such technology—at a quality that some might find indistinguishable from source material—also raises important and valid concerns about the potential for misuse," they write. "We acknowledge that bad actors might use such technologies to falsify personal statements and slander prominent individuals. We are concerned about such deception and misuse."

They write that they hope bringing these methods to light will raise awareness of video editing techniques, so that people are more skeptical of what they see.
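
For readers curious about the viseme step described earlier (stitching "fox" out of "viper" and "ox"), here is a toy sketch of the idea in Python. It is not the researchers' code: the phoneme labels, the `VISEME_CLASS` table, and the greedy `find_segments` search are all assumptions made for illustration, and the real system works on video frames and a 3D face model rather than lists of strings.

```python
# Hypothetical phoneme-to-viseme table (illustrative only, not from the paper).
# Sounds that look alike on the lips share a class, e.g. "F" and "V".
VISEME_CLASS = {
    "F": "labiodental", "V": "labiodental",
    "P": "bilabial", "B": "bilabial", "M": "bilabial",
    "K": "velar", "G": "velar",
    "S": "sibilant", "Z": "sibilant",
    "AA": "open", "AY": "open-glide", "ER": "r-colored",
}


def to_visemes(phonemes):
    """Map each phoneme label to its (assumed) viseme class."""
    return [VISEME_CLASS[p] for p in phonemes]


def find_segments(source, target):
    """Cover the target with runs of source phonemes that look the same.

    Greedy search: at each position in the target, pick the longest run in
    the source whose viseme classes match. Returns (start, end) index pairs
    into the source list, with end exclusive.
    """
    src_v = to_visemes(source)
    tgt_v = to_visemes(target)
    segments = []
    i = 0
    while i < len(tgt_v):
        best_len, best_start = 0, None
        for s in range(len(src_v)):
            length = 0
            while (i + length < len(tgt_v)
                   and s + length < len(src_v)
                   and src_v[s + length] == tgt_v[i + length]):
                length += 1
            if length > best_len:
                best_len, best_start = length, s
        if best_start is None:
            raise ValueError(f"no matching mouth shape for {target[i]!r}")
        segments.append((best_start, best_start + best_len))
        i += best_len
    return segments


# Source footage contains "viper" followed later by "ox".
source = ["V", "AY", "P", "ER", "AA", "K", "S"]
# Target word "fox": the "F" borrows the mouth shape of the "V" in "viper".
target = ["F", "AA", "K", "S"]
print(find_segments(source, target))  # [(0, 1), (4, 7)]
```

The output says the new word can be assembled from the first sound of "viper" plus all three sounds of "ox", which mirrors the example in the article.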

